Introduction

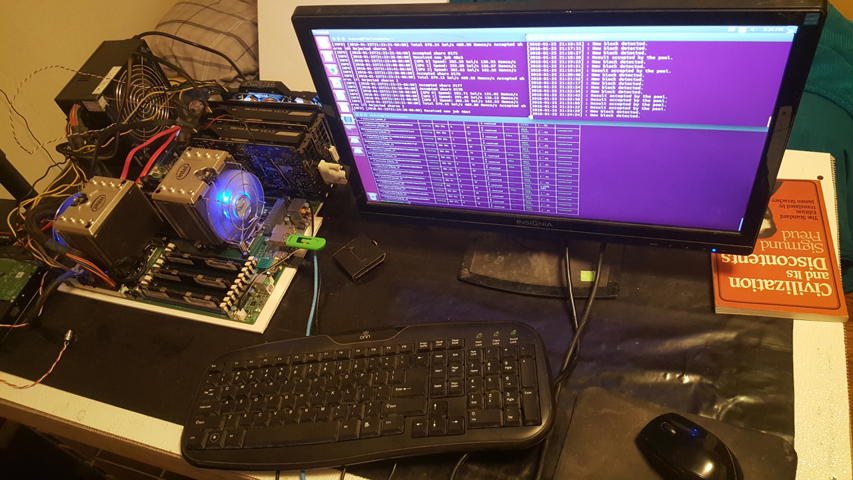

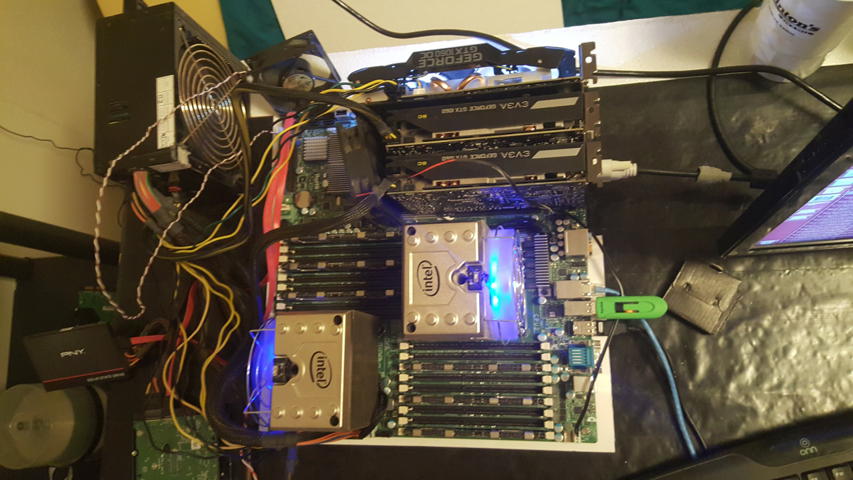

I was getting tired of rigging my old Ubuntu 16.04 desktop-turned-server, so I decided to spend a few dollars and buy some old (circa 2012) server hardware.

I use this server mainly for Python and web development. It also has some general-purpose file storage for backups and such.

Basic Setup

Throughout this page I will refer to two physical computers: my laptop and the server. The server is located at 192.168.1.10 and my laptop at 192.168.1.2. My laptop runs Ubuntu 16.04. For the installation of Ubuntu Server 18.04 on the server, I hooked up a temporary monitor with a spare GPU. Otherwise, the server runs headless, with access only via SSH and only using my key. I named the non-root sudo user user and set the server hostname to host. Change these as you see fit.

Install Ubuntu Server as usual by following the on-screen directions. I opted to automatically install security updates and use an encrypted LVM. I also checked the box to install OpenSSH.

The new Ubuntu installer does not support encrypted drives, so you will need to grab an alternate image here. Encryption isn’t necessary; I just prefer whole-disk encryption. NOTE: you will have to enter the decryption password on every reboot. This is especially difficult for a headless system. Enable encryption only if you are super paranoid and don’t mind being physically present for every reboot.

After the fresh install with encrypted LVM and OpenSSH:

sudo apt update

sudo apt dist-upgrade

Enable firewall:

sudo ufw allow in openSSH

sudo ufw enable

Set static IP (replace the interface name and IP addresses for your system):

sudo nano /etc/netplan/01-netcfg.yaml

network:
  version: 2
  renderer: networkd
  ethernets:
    enp1s0f0:
      dhcp4: no
      dhcp6: no
      addresses: [192.168.1.10/24]
      gateway4: 192.168.1.1
      nameservers:
        addresses: [192.168.1.1, 8.8.8.8, 1.1.1.1]

sudo netplan apply
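Before rebooting, it may be worth confirming that the static address actually landed on the interface. The sketch below assumes the ip tool from iproute2 is installed. The name has_ipv4_addr is a hypothetical helper, not part of any package, and it only inspects text in the one-line-per-address format that ip -o -4 addr show prints.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if the `ip -o -4 addr show` output on stdin
# lists the given CIDR address (for example 192.168.1.10/24).
has_ipv4_addr() {
    # In -o (one-line) mode the address is the field right after "inet".
    awk -v want="$1" '
        { for (i = 1; i < NF; i++) if ($i == "inet" && $(i + 1) == want) found = 1 }
        END { exit !found }
    '
}

# Usage on the server (interface name and address are the ones used above):
#   ip -o -4 addr show dev enp1s0f0 | has_ipv4_addr 192.168.1.10/24 \
#       && echo "static IP is set"
```

If the check fails, the indentation of the netplan file is a good first place to look, since YAML nesting decides which interface the addresses belong to.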

sudo reboot

You will need to log in again at the new IP address.

To install the Python 3.6 version of Anaconda (not Miniconda) and set up Python environments:

wget https://repo.anaconda.com/archive/Anaconda3-5.2.0-Linux-x86_64.sh

chmod +x Anaconda3-5.2.0-Linux-x86_64.sh

bash Anaconda3-5.2.0-Linux-x86_64.sh

Answer the questions as you see fit. I went with the default user installation. Set up py36 and py27 environments:

conda update conda

conda create --name py36 python=3.6

conda create --name py27 python=2.7

source activate py36

pip install --upgrade pip

source activate py27

pip install --upgrade pip

source deactivate

I wanted to allow only SSH key logins from my laptop. From my laptop terminal window:

ssh-copy-id [SERVER_USERNAME]@[SERVER_IP_ADDRESS]

Now SSH into the server again and modify the SSH config file:

sudo nano /etc/ssh/sshd_config

Uncomment the line PasswordAuthentication yes and change it to:

PasswordAuthentication no

Write your changes and exit. Then restart SSH:

sudo systemctl restart ssh

Log out and log back in to make sure everything works:

exit

ssh [SERVER_USERNAME]@[SERVER_IP_ADDRESS]

I also wanted a few services:

sudo apt update

sudo apt install python-dev python3-dev build-essential git mysql-server

sudo mysql_secure_installation

I also activated live-patch kernel updates by following these instructions. Best to be safe with Spectre and Meltdown so close in recent memory.

sudo snap install canonical-livepatch

sudo canonical-livepatch enable [LIVEPATCH-KEY]

And reboot just for good measure:

sudo reboot

RabbitMQ

The best way to install RabbitMQ is to follow the instructions to add the PPA. For the impatient:

wget -O - 'https://dl.bintray.com/rabbitmq/Keys/rabbitmq-release-signing-key.asc' | sudo apt-key add -

echo "deb https://dl.bintray.com/rabbitmq/debian bionic main" | sudo tee /etc/apt/sources.list.d/bintray.rabbitmq.list

sudo apt update

sudo apt install rabbitmq-server

sudo service rabbitmq-server start

If you are running Uncomplicated Firewall (you should be), you can create an app profile:

sudo nano /etc/ufw/applications.d/rabbitmq-server

[RabbitMQ]

title=An open source message broker.

description=A lightweight and easy to deploy message broker from rabbitmq.com.

ports=4369,5671:5672,25672,35672:35682,15672/tcp

Then allow it in UFW:

sudo ufw app update RabbitMQ

sudo ufw allow RabbitMQ

Port 15672/tcp is only needed if you want the web UI:

sudo rabbitmq-plugins enable rabbitmq_management

sudo service rabbitmq-server restart

By default the user guest can only access the web UI on localhost, so we have to create an admin user with better security:

sudo rabbitmqctl add_user [USERNAME] [PASSWORD]

sudo rabbitmqctl set_user_tags [USERNAME] administrator

sudo rabbitmqctl set_permissions -p / [USERNAME] ".*" ".*" ".*"

It’s best to delete the guest account:

sudo rabbitmqctl delete_user guest

You should now be able to access the UI at http://[SERVER_IP_ADDRESS]:15672 using the newly created account.

Enable rabbitmq at startup:

sudo systemctl enable rabbitmq-server

It would probably be better to only allow admin to work on localhost and create a separate user for other work, but that’s for another day.
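The UFW application profile created earlier in this section is a small INI-style file, and the MongoDB section further down uses the same layout. As a rough sketch, a shell function could print such a profile so it can be reviewed before it is written into /etc/ufw/applications.d/. The name ufw_profile is hypothetical, and the function does no validation of the ports string.

```shell
#!/bin/sh
# Hypothetical helper: print a UFW application profile to stdout.
# Arguments: profile name, title, description, ports specification.
ufw_profile() {
    printf '[%s]\n' "$1"
    printf 'title=%s\n' "$2"
    printf 'description=%s\n' "$3"
    printf 'ports=%s\n' "$4"
}

# Usage (file name and values mirror the RabbitMQ profile above):
#   ufw_profile RabbitMQ "An open source message broker." \
#       "A lightweight and easy to deploy message broker from rabbitmq.com." \
#       "4369,5671:5672,25672,35672:35682,15672/tcp" \
#       | sudo tee /etc/ufw/applications.d/rabbitmq-server
#   sudo ufw app update RabbitMQ
```

Printing to stdout and piping through sudo tee keeps the function itself free of root privileges.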

Self-Signed Certificate

Setting up NuPIC

NuPIC is one of my favorite AIs at the moment. It takes a little bit of work to get it running, however.

Just in case you didn’t do the above setup, here’s what’s needed from a fresh install of Ubuntu Server 18.04:

sudo apt update

sudo apt upgrade

sudo apt install build-essential python-dev mysql-server git

sudo mysql_secure_installation

Assuming you are using Anaconda:

conda create --name nupic python=2.7

source activate nupic

pip install --upgrade pip

pip install nupic

I also like to have the nupic repo to run tests and such:

git clone https://github.com/numenta/nupic.git

If you chose to set a root password (you should), then the default config for nupic will not work. You could give nupic admin privileges under the root login, or you can create a new user called nupic. After logging into your MySQL server as root (sudo mysql):

CREATE DATABASE nupic;

CREATE USER 'nupic'@'localhost' IDENTIFIED BY 'nupic';

GRANT ALL PRIVILEGES ON nupic.* TO 'nupic'@'localhost';

Repetitive, I know. Set the username, password, and database to whatever you want. Now we must update the nupic config to match the MySQL login we just created:

nano ~/anaconda3/envs/nupic/lib/python2.7/site-packages/nupic-1.0.6.dev0-py2.7.egg/nupic/support/nupic-default.xml

Change the nupic.cluster.database.user variable to your new MySQL user:

<property>

<name>nupic.cluster.database.user</name>

<value>nupic</value>

<description>Username for the MySQL database server </description>

</property>

And change the nupic.cluster.database.passwd variable to your new password:

<property>

<name>nupic.cluster.database.passwd</name>

<value>nupic</value>

<description>Password for the MySQL database server </description>

</property>

And that’s it. You should have a running nupic setup. You can run the unit tests:

cd ~/nupic/

py.test tests/unit

WebDAV

My Zotero library far exceeds the built-in cloud file storage. I could pay Zotero for extra storage (which I hope to do when I have money), or I can set up a WebDAV server myself. I followed this instruction set from DigitalOcean. For the lazy, install the necessary components:

sudo apt install apache2 apache2-utils

sudo a2enmod dav

sudo a2enmod dav_fs

sudo a2enmod auth_digest

Open ports in UFW:

sudo ufw allow in apache

Create the webdav directory and update permissions:

sudo mkdir /var/www/webdav

sudo chown -R www-data:www-data /var/www/

Create a webdav user called zotero and update permissions (enter a good password at the prompt):

sudo htdigest -c /etc/apache2/users.password webdav zotero

sudo chown www-data:www-data /etc/apache2/users.password

Modify the apache2 config (sudo nano /etc/apache2/sites-available/000-default.conf) to serve the webdav folder by adding this to the <VirtualHost> section:

# WebDAV

Alias /webdav /var/www/webdav

<Directory /var/www/webdav/>

DAV On

AuthType Digest

AuthName "webdav"

AuthUserFile /etc/apache2/users.password

Require valid-user

</Directory>

Also add the following as the first line of the file:

DavLockDB /var/www/DavLock

Zotero requires a folder called zotero in the webdav directory. Also, a Zotero library can get pretty large, so I’m creating a symlink to a non-system disk mounted at /media/2TB/ with more space:

sudo ln -s /media/2TB/media/zotero /var/www/webdav/zotero

You probably could make the changes in the apache2 config file, but that would require different permissions on my disk, which I don’t want to do.

Restart to update all the changes:

sudo service apache2 restart

You should be able to access your WebDAV at http://[SERVER_IP_ADDRESS]/webdav/ with the username and password set earlier.

Zotero Settings: Edit -> Preferences -> Sync -> File Sync -> Sync attachment files in My Library using WebDAV

Later I will update WebDAV to work over SSL, but this isn’t strictly necessary. I don’t use the WebDAV for anything other than Zotero, and all that info is public anyway. Without SSL your files travel unencrypted, and digest authentication only protects your password with an MD5-based hash, so don’t reuse a password (you shouldn’t be doing that anyway).
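One detail worth spelling out: the realm given to htdigest (webdav above) has to match AuthName in the Apache config, because the stored hash covers the user name, realm, and password together. The sketch below reproduces the line format that htdigest writes, assuming md5sum from GNU coreutils is installed. The name digest_line is a hypothetical helper, and passing a password as an argument is for illustration only.

```shell
#!/bin/sh
# Hypothetical helper: print an htdigest-style entry,
# user:realm:MD5(user:realm:password).
digest_line() {
    user="$1"; realm="$2"; pass="$3"
    # md5sum prints "<hash>  -"; keep only the hash field.
    hash=$(printf '%s:%s:%s' "$user" "$realm" "$pass" | md5sum | cut -d ' ' -f 1)
    printf '%s:%s:%s\n' "$user" "$realm" "$hash"
}

# Changing the realm changes the hash, which is why a realm that does not
# match AuthName makes every login fail:
#   digest_line zotero webdav 'example-password'
#   digest_line zotero other  'example-password'
```

For real use, the interactive htdigest prompt is still the safer route, since it keeps the password out of the shell history.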

Plex Media Server

I followed these instructions pretty much to the letter, so I won’t repost them here. In order to play some of the files I also installed VLC (sudo apt install vlc). It was easier than tracking down all the necessary codecs one by one.

MongoDB

I followed these instructions almost verbatim. The only change I made was to add a profile for UFW (sudo nano /etc/ufw/applications.d/mongod):

[mongodb]

title=NoSQL database program

description=A free and open-source cross-platform document-oriented database program.

ports=27017/tcp

sudo ufw allow in mongodb

Factorio Headless Server

Download a copy of the Factorio headless image.

Torrent Server

I want to be able to share some large files. In all likelihood, only a few downloads will ever happen, so I think a torrent server would be appropriate. Unfortunately, my favorite torrent client, Transmission, does not have a built-in tracker, so I was forced to use qBittorrent. My server is headless, so I followed these installation instructions for the basic setup. Then I needed it to act as a tracker. Unfortunately, I couldn’t get this to work even after several hours of fiddling, so I resorted to using public trackers obtained here. Since I’m apparently not smart enough to get my server to act as a tracker, I have elected to scrap the whole thing and use Transmission. One day I might return:

TODO: Work on public torrent tracker system for server

The main use of my torrent services at the moment is mirroring data for academictorrents.com. So far I have almost a dozen supported torrents from them, clocking in at about 600GB. Check out my lasrswrd profile. I generally selected torrents to host by looking for fewer than ten mirrors and only one or two in the U.S. I also looked at the number of downloads and the upload date, with a preference for recent and popular torrents. I also download things like OS images via the server so they can be uploaded in kind.

Dynamic DNS

I’ve always had good luck with noip.com. Normally I would install their dynamic update client on the server, but I have found a better way. Tomato has a setting for dynamic updates. It’s as simple as adding your login info and hostname and clicking save. Now my server can be reached at flaminglasersword.ddns.net.

KVM

Tor Bridge

I would like to help the Tor Project in any way that I can. I don’t have a fast internet connection (circa 30Mb down/5Mb up), so I can’t run a full relay. The only thing I can do at the moment is run an obfuscated bridge. The first step is to create a new KVM to host the Tor service. I’m using a copy of Ubuntu 16.04 for stability and compatibility. A Tor bridge doesn’t need all that much in terms of processing and memory; the limiting factor is the bandwidth. I may upgrade the RAM in the future. First, some prep for the host:

sudo ufw allow in Apache\ Secure

A copy of Ubuntu Server was downloaded using the torrent link and copied to /var/lib/libvirt/images/. Create the new KVM:

sudo virt-install --name Ubuntu-16.04 --ram=512 --vcpus=1 --cpu host --hvm --disk path=/var/lib/libvirt/images/ubuntu-16.04-vm1,size=8 --cdrom /var/lib/libvirt/boot/ubuntu-16.04.5-server-amd64.iso --graphics vnc

https://www.torproject.org/docs/debian.html.en#ubuntu

sudo apt install gnupg2

Standalone Slurm

Instructions derived from here and here

sudo apt install libhdf5-dev freeipmi-common libdbi1 libfreeipmi16 libhwloc-plugins libhwloc5 libipmimonitoring5a liblua5.1-0 libmysqlclient20 librrd8 libslurmdb32 munge mysql-server cgroup-bin

wget https://download.schedmd.com/slurm/slurm-18.08.3.tar.bz2

tar -xvf slurm-18.08.3.tar.bz2

cd slurm-18.08.3

./configure --prefix=/etc/init.d/ --sysconfdir=/etc/slurm-llnl/

make

make install

sudo /usr/sbin/create-munge-key

Log into your MySQL server as root:

create database slurm_acct_db;

create user 'slurm'@'localhost';

set password for 'slurm'@'localhost' = 'slurm';

grant usage on *.* to 'slurm'@'localhost';

grant all privileges on slurm_acct_db.* to 'slurm'@'localhost';

flush privileges;

Use whatever password you think appropriate.
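Both the NuPIC and Slurm sections create a database plus a local user that owns it, so the statements could be generated rather than retyped. This is only a sketch: service_db_sql is a hypothetical helper, it assumes the names and password contain no quotes or other special characters, and it prints the SQL instead of running it so it can be checked first.

```shell
#!/bin/sh
# Hypothetical helper: print SQL that creates a database and a local user
# with full privileges on that database only.
# Arguments: database name, user name, password (plain characters assumed).
service_db_sql() {
    db="$1"; user="$2"; pass="$3"
    printf "CREATE DATABASE %s;\n" "$db"
    printf "CREATE USER '%s'@'localhost' IDENTIFIED BY '%s';\n" "$user" "$pass"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'localhost';\n" "$db" "$user"
    printf "FLUSH PRIVILEGES;\n"
}

# Usage: review the output, then pipe it to the root MySQL session:
#   service_db_sql slurm_acct_db slurm 'a-better-password'
#   service_db_sql slurm_acct_db slurm 'a-better-password' | sudo mysql
```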

Make the config files.

sudo mkdir /etc/slurm-llnl

sudo nano /etc/slurm-llnl/cgroup.conf

CgroupAutomount=yes

ConstrainCores=no

ConstrainRAMSpace=no

sudo nano /etc/slurm-llnl/slurm.conf

ControlMachine=host

AuthType=auth/none

CryptoType=crypto/munge

MpiDefault=none

ProctrackType=proctrack/cgroup

ReturnToService=1

SlurmctldPidFile=/var/run/slurm-llnl/slurmctld.pid

SlurmctldPort=6817

SlurmdPidFile=/var/run/slurm-llnl/slurmd.pid

SlurmdPort=6818

SlurmdSpoolDir=/var/spool/slurmd

SlurmUser=slurm

SlurmdUser=slurm

StateSaveLocation=/var/spool/slurmd

SwitchType=switch/none

TaskPlugin=task/none

InactiveLimit=0

KillWait=30

MinJobAge=300

SlurmctldTimeout=120

SlurmdTimeout=300

Waittime=0

FastSchedule=1

SchedulerType=sched/backfill

SelectType=select/linear

AccountingStorageType=accounting_storage/none

AccountingStoreJobComment=YES

ClusterName=cluster

JobCompType=jobcomp/none

JobAcctGatherFrequency=30

JobAcctGatherType=jobacct_gather/none

SlurmctldDebug=5

SlurmctldLogFile=/var/log/slurm-llnl/slurmctld.log

SlurmdDebug=3

NodeName=host NodeAddr=xxx.xxx.xxx.xxx.xx CPUs=24 CoresPerSocket=6 ThreadsPerCore=2 State=UNKNOWN RealMemory=24670964

PartitionName=debug Nodes=host Default=YES MaxTime=INFINITE State=UP

Change NodeName= and Nodes= to match whatever you put in ControlMachine=. Change CPUs=, CoresPerSocket=, ThreadsPerCore=, and RealMemory= to match your system.

Use free -m to determine your RealMemory (Slurm expects the value in megabytes) and lscpu to determine CPU info.
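The values for the NodeName line can also be pulled out with a couple of small functions. This is a sketch under a few assumptions: the helper names mem_total_mb and lscpu_field are hypothetical, lscpu is assumed to print English labels, and RealMemory is taken as total memory rounded down to whole megabytes.

```shell
#!/bin/sh
# Hypothetical helpers for filling in the NodeName line.

# Print total memory in whole megabytes from a /proc/meminfo-style file
# (MemTotal is reported there in kB).
mem_total_mb() {
    awk '/^MemTotal:/ { print int($2 / 1024) }' "${1:-/proc/meminfo}"
}

# Print one field from `lscpu` output on stdin, matched by its exact label,
# e.g. "CPU(s)", "Core(s) per socket", or "Thread(s) per core".
lscpu_field() {
    awk -F: -v label="$1" '$1 == label { gsub(/^[ \t]+/, "", $2); print $2 }'
}

# Usage on the server:
#   echo "RealMemory=$(mem_total_mb)"
#   echo "CPUs=$(lscpu | lscpu_field 'CPU(s)')"
#   echo "CoresPerSocket=$(lscpu | lscpu_field 'Core(s) per socket')"
#   echo "ThreadsPerCore=$(lscpu | lscpu_field 'Thread(s) per core')"
```

Matching the label exactly avoids picking up similar lines such as "On-line CPU(s) list".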

sudo nano /etc/slurm-llnl/slurmdb.conf

# slurmDBD info

DbdAddr=localhost

DbdHost=localhost

SlurmUser=slurm

DebugLevel=4

PidFile=/var/run/slurm-llnl/slurmdbd.pid

# Database info

StorageType=accounting_storage/mysql

StoragePass=slurm

StorageUser=slurm

StorageLoc=slurm_acct_db

sudo mkdir /var/log/slurm-llnl /var/spool/slurmd /var/run/slurm-llnl

Change permissions:

sudo chown -R slurm:slurm /etc/slurm-llnl/ /var/log/slurm-llnl/ /var/run/slurm-llnl

sudo chmod -R 664 /etc/slurm-llnl/slurm.conf /etc/slurm-llnl/slurmdb.conf /etc/slurm-llnl/cgroup.conf

Note: If you have trouble with the make commands, try sudo make instead.